As a digital photographer working in 2024, I need to decide whether, and how, to use image manipulation in post-production. Image manipulation or creation using generative artificial intelligence (AI) is currently controversial among photographers for a number of reasons, including the ethics of how AI is trained and the effect of AI imagery on the value of more traditional work. Historically, it is not uncommon for a disruptive new technology to be met with resistance from people skilled in an existing practice that it stands to affect, and looking at past examples of this could provide guidance on how to respond to the advent of generative AI.

A particularly relevant example is the debate between pictorial and ‘straight’ photography, which arose as early technological advances, in particular the darkroom techniques of dodging and burning, allowed for image manipulation. Pictorial photography, typical of the Pictorialist movement of the late 19th and early 20th centuries, is characterised by image manipulation (either in or out of camera) that distances the final look of a photograph from a faithful representation of its subject. Straight photography, by contrast, seeks to present a photograph as a true representation of its subject: sharp and in focus, a snapshot of a particular thing or place at a particular time.

Patricia D. Leighten compares, contrasts and comments on several critical positions on straight versus pictorial photography in her 1977 article Critical Attitudes toward Overtly Manipulated Photography in the 20th Century. She adopts the term “overtly manipulated” after rejecting several alternatives, such as ‘pictorial’, ‘subjective photography’ and simply ‘manipulated’, as unhelpful or inaccurate descriptions of image manipulation both in and out of camera. Leighten claims that “overtly manipulated photography announces the fact that it has been shaped by an imagination, that it is an artificial document, that it has purposely altered one’s expectation that the photograph is an accurate transcription of reality. […] In straight photography, there is a greater emphasis on subject matter itself; an illusion persists that all the information is contained in the object photographed[…]” (Leighten, 1977, p. 133).

The idea that a ‘straight’ photograph is a faithful representation of its subject is indeed, as Leighten puts it, an illusion. While this is more widely understood now that digital manipulation tools such as Photoshop, and especially generative AI, have become commonplace, it has arguably always been true.

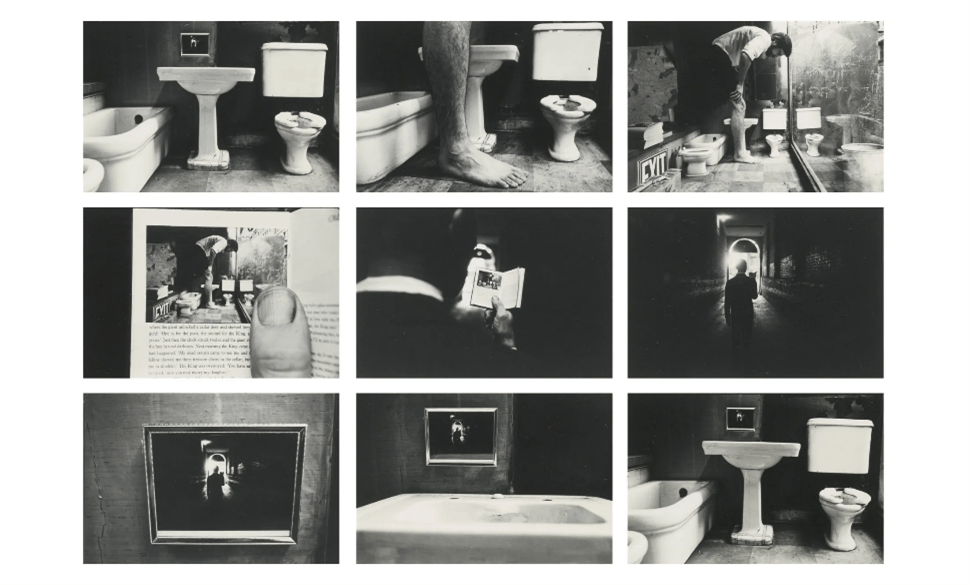

Leighten mentions two very different works in the introduction to her article. One of these is Duane Michals’ 1973 Things Are Queer, a sequence of nine staged photographs shot on film.

Things Are Queer is an example of a photographic work that is not merely directorial but actively draws attention to the fact that it is a fabrication. The viewer is drawn in by the mundanity of the first photograph and then surprised when its scale shifts in an unnatural way. Through the remainder of the sequence, Michals’ continual zooming out and recontextualisation push the work further and further from the apparently documentary opening image, so that by the time the viewer returns to it, it has taken on an entirely new meaning. Despite this, every photograph appears to be straight out of camera and unmanipulated. The fiction of the scenario contrasts with its seemingly factual presentation, and this juxtaposition could serve as a commentary on the directorial mode itself.

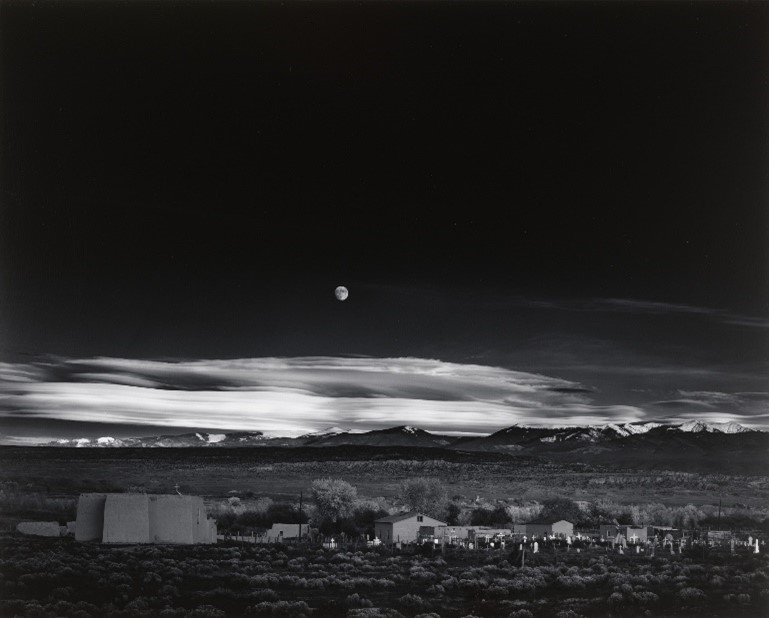

In stark contrast to Things Are Queer is Ansel Adams’ 1941 photograph Moonrise, Hernandez, New Mexico, which, as Leighten points out, is a textbook example of ‘pure photography’ (1977), succeeding in providing a faithful representation of a particular place and time. Leighten erroneously describes Moonrise as being made with two exposures and uses this as an example of image manipulation. In reality, the photograph was captured in a single exposure, though it was manipulated out of camera. In 1948, at some point during the production of its roughly 1,300 prints, Adams “bathed part of the negative in a chemical intensifier in order to create more contrast in the foreground and to make the moon brighter” (Grant, 2011).

Leighten’s broader point nonetheless stands: Moonrise was subject to manipulation despite being “considered the very epitome of the ‘straight’ or ‘purist’ school of photography” (1977, p. 133).

When we attempt to categorise Things Are Queer and Moonrise, Hernandez, New Mexico as either straight or pictorial photography, several readings can be considered valid. Moonrise can be read as pictorial photography because of Adams’ post-processing, but his treatment could just as easily be considered straight photography if its purpose was to overcome the technological limitations of his camera, bringing the final image as close as possible to what Adams saw with his own eyes. Similarly, Things Are Queer could be called straight photography because it lacks post-processing, but it could also be argued that Michals’ compositions and directorial decisions were simply ways of pictorialising the photographs in camera.

As A. D. Coleman asserts in his article The Directorial Mode: Notes Toward a Definition, the decisions made by a photographer working in any mode influence the information relayed to the viewer and render the photograph an object separate from the place or events it depicts (1976). Following this idea, we could argue that the ability of generative AI to modify the contents of a photograph is not a new problem and therefore should not be a primary concern.

Coleman suggests that terms such as ‘documentary’ and ‘straight/pure’ are misleading, offering instead the terms ‘informational’ and ‘contemplative/representational’ respectively (1976), but I would go further and assert that any dependence on binary categories for qualitative assessment is inherently limiting. As demonstrated above, subjective readings of a work can assign it to an existing category; indeed, preconceived rigid definitions could even direct how a work is read in the first place. It may therefore be more appropriate to use these sorts of categories descriptively, as shortcuts, rather than prescriptively.

As it stands, binary models of categorising art tend to arise where opinions clash, whether the discourse concerns purpose, ethics, value or something else. When emerging technologies or processes threaten to destabilise an established art form, these binary models often collapse, amalgamating into a unified ‘old’ set against the ‘new’. As Chandler and Livingston explore in their article Reframing the Authentic: Photography, Mobile Technologies, and the Visual Language of Digital Imperfection, new movements in art are countered by returns to older methods in an attempt to reassert authenticity (2016). In rejecting newer practices, these counter-movements often drop older concerns and tacitly accept once-contested conventions. This is apparent in responses to the advent of photography itself, digital photography, mobile photography, and now generative AI.

McCormack et al. raise several cogent points in their 2014 article Ten Questions Concerning Generative Computer Art. By posing questions rather than trying to predict the future direction of generative art, they have provided more than just a snapshot of decade-old opinions on an emerging practice. Their philosophical concerns remain relevant today, particularly their discussion of the quantifiability of aesthetics. McCormack et al. suggest that human aesthetics can be formalised, “unless we think there is something uncomputable going on in the human brain” (2014, p. 136). Whether this is a truism depends on whether our definition of ‘uncomputable’ differs materially from ‘unquantifiable’; regardless, the way current generative AI works does rely on an ability to quantify aesthetic elements. Despite advances in the realism and persuasiveness of its output, generative AI still produces examples of combinatorial rather than emergent creativity, to use McCormack et al.’s distinction. Their claim that “getting symbols with new semantics to emerge within a running program is currently an open research problem” (2014, p. 138) remains true. Current generative AI cannot produce compelling art unprompted, except by chance, and even when prompted, the art it generates is an amalgamation of the material it was trained on. Of course, combinatorial creativity is not necessarily of lower value than emergent creativity, but for generative AI to produce examples of emergent creativity, it would likely require a mode of operation much closer to artificial general intelligence.

Reviewing McCormack et al.’s article shows that modern generative AI may not differ from earlier generative processes as much as public opinion implies. Popular generative AIs such as Midjourney and DALL-E are generally built on diffusion models rather than the GAN (generative adversarial network) method introduced in 2014, but both belong to the same lineage of deep generative models, and current systems are better understood as refinements of that earlier work than as a clean break from it (Foote, 2024). The three primary differences between those earlier models and current ones are familiarity of output, accessibility, and source of training data. These differences are likely responsible for much of the negative sentiment among artists surrounding the use of generative AI.

Familiarity of output and accessibility mirror earlier concerns about past disruptive photographic technologies, and I claim that by drawing these parallels, we can summarily dismiss them, at least from an art-value standpoint. Historical context suggests that while making an art form more accessible may result in a larger volume of lower-value work, compelling art created by skilled artists will remain relevant and in demand. Likewise, just as the advent of photography shifted painting practice without replacing it, generative AI will likely shift photographic practice in a similar way as it becomes able to output more convincing and familiar material. There is certainly cause for panic if an artist wishes to remain relevant without responding to change, but such an artist should be panicking regardless.

The material on which generative AIs are trained remains a valid concern, and one that is harder to relate to previous technological disruptions. Readymade art, collage and assemblage, while relevant practices, resulted more from philosophical shifts than from technological disruptions, and they remain as disputed as they are ever likely to be. I would suggest that the unauthorised use of existing art by generative AI developers, and whether this practice differs from that of other creators, should be allowed to remain similarly disputed.

Firefly, Adobe’s generative AI, differs from many other generative AIs in two key ways intended to make it more ethical: it is trained only on licensed Adobe Stock content and copyright-free material, and it participates in the Content Authenticity Initiative (CAI) by embedding metadata in its outputs that identifies their point of origin (Coleman, 2024).

Australian street and travel photographer Jayson Robertson uses the Firefly-powered Generative Fill and Generative Remove tools to remove people and objects from the backgrounds of his photographs (Mani, 2024). Robertson’s photographs are firmly examples of pictorial photography: their intentionally unnatural colour palettes and their symmetry, which is often also achieved with Firefly tools, push them into the realm of unreality. I consider Robertson’s work an acknowledgement that generative AI’s continued presence is inevitable. He does not attempt to hide his image manipulation, but rather makes it a focal point of his work. Supporting this, Robertson talks openly about his process, noting that “some street photographers still spurn AI, and I’ll admit using Firefly felt like cheating at first. But then I remembered the endgame, which is to create beautiful content that speaks to my audience. Any technology that helps me do that is a welcome addition to my toolbox” (as cited in Mani, 2024).

Robertson’s use of generative AI to modify existing photographs rather than generate entirely new images is a good example of what I predict will be the predominant use of AI in photography in the near future. Even as generative AI becomes more adept at representing reality, photography’s primary purpose of capturing real-world visual data means that starting from a real photograph will continue to make the most sense. Fully AI-generated images are already becoming more prevalent in art forms that rely more on creating from imagination, such as illustration, and we should expect this to continue. Until generative AI can create emergently, it is important to remember that works of artistic value can only be intentionally produced by an artist using generative AI as a tool, and the more granular the control the artist needs, the more skilled work they must do themselves. Our responses to modern art movements already suggest that conceptual skill can be just as important as manual execution, and this idea applies equally to art created using generative AI.

Examining history, we can see an enduring cycle in the art world: new technologies arise, disrupt existing practices, are initially rejected, and eventually become commonplace and accepted. These changes can have far-reaching effects, but attempts to suppress them have consistently proved ineffective, and many prominent art movements have arisen from rebellion against such suppression. Image manipulation is not a new concern, and if generative AI is just the latest in this series of developments, artists may do well to learn from history and respond to change with an open mind.

References

Coleman, A. D. (1976). The directorial mode: notes toward a definition. Artforum, 15(1), 55–60.

Coleman, K. (2024). Adobe Firefly: everything you need to know about the AI image and video generator. Creative Bloq. https://www.creativebloq.com/features/everything-you-need-to-know-about-adobe-firefly

Foote, K. D. (2024). A brief history of generative AI. Dataversity. https://www.dataversity.net/a-brief-history-of-generative-ai/

Grant, D. (2011). The market for Ansel Adams and Moonrise, Hernandez, New Mexico. Artnet. https://www.artnet.com/magazineus/features/grant/ansel-adams-moonrise-hernandez-8-31-11.asp

Leighten, P. D. (1977). Critical attitudes toward overtly manipulated photography in the 20th century. Art Journal, 37(2), 133–138.

Mani, R. (2024). Jayson Robertson takes generative AI to the street. Adobe Blog. https://blog.adobe.com/en/publish/2024/02/01/jayson-robertson-takes-generative-ai-to-street

McCormack, J., Bown, O., Dorin, A., McCabe, J., Monro, G., & Whitelaw, M. (2014). Ten questions concerning generative computer art. Leonardo, 47(2), 135–141.